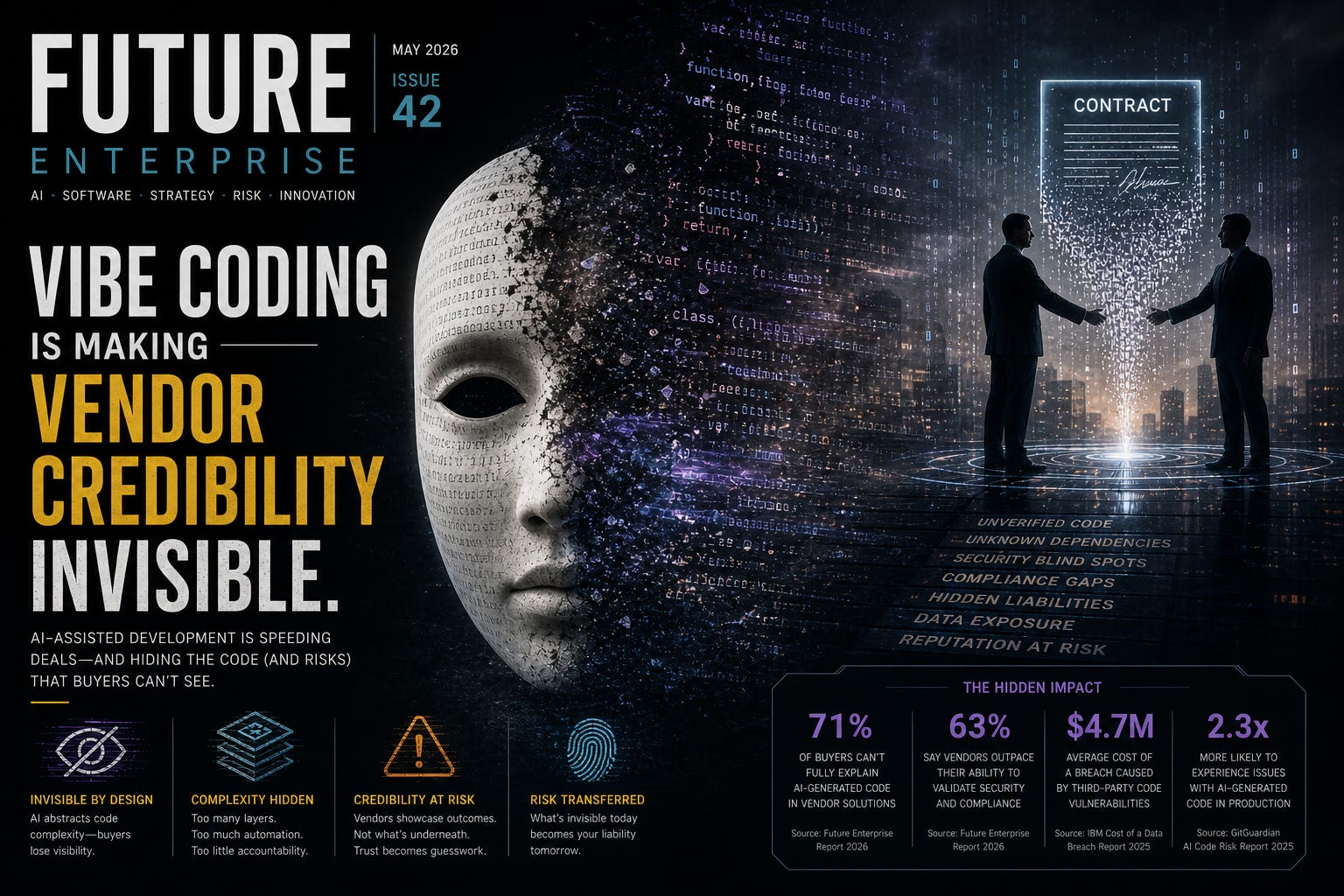

Vibe Coding Is Making Vendor Credibility Invisible

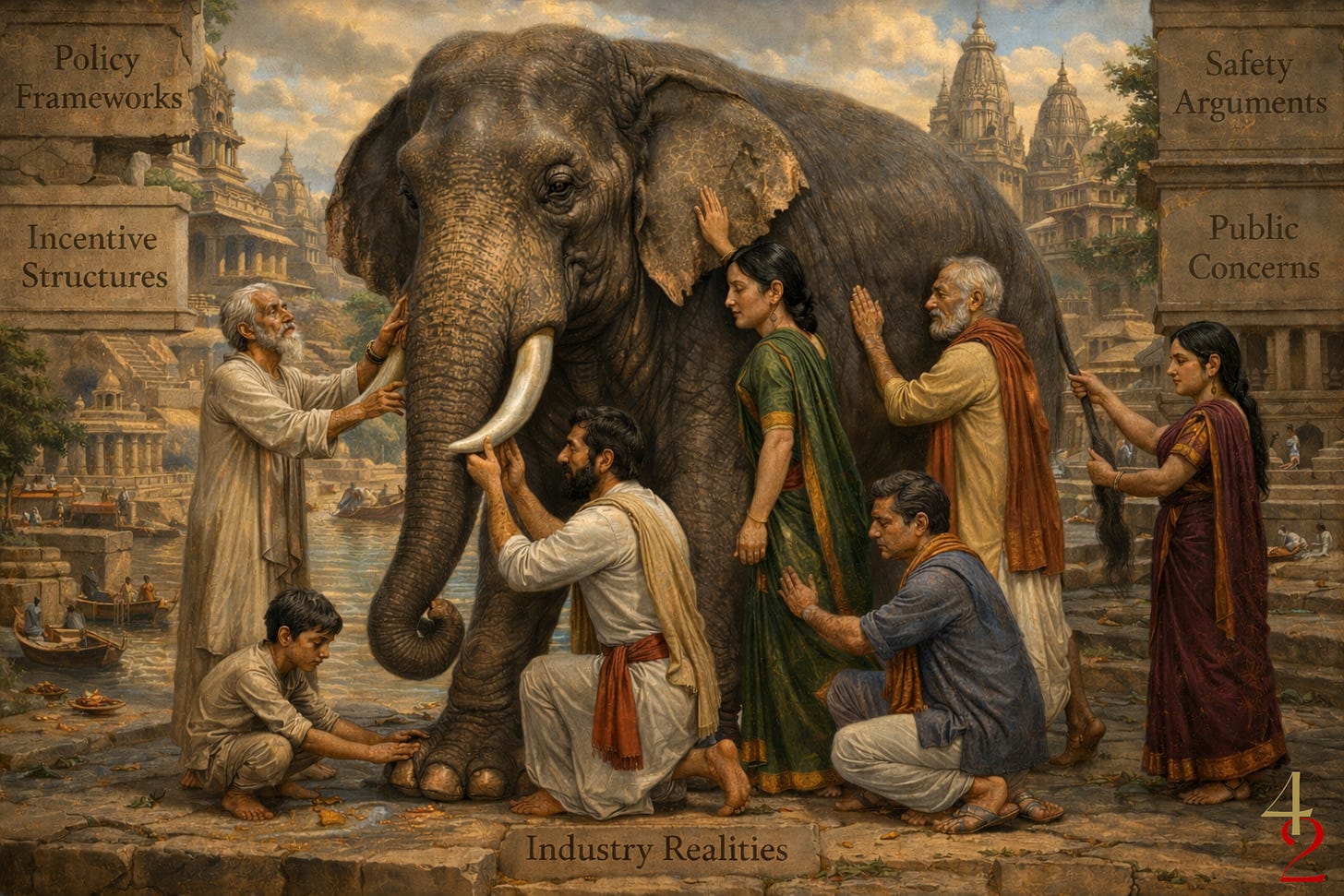

Over the last few months, I’ve been knee‑deep in AI governance: helping organisations translate glossy policy decks into actual operating models. What I keep seeing is the “blind men and the elephant” effect—everyone is touching a different part of the beast and calling it “AI governance.”

Ethicists feel the ‘tail’ of fairness and bias. Security teams feel the attack‑surface ‘leg’. Compliance teams feel the regulatory ‘trunk’. Product leaders feel the velocity‑driven ‘ear’. All of them are right at the local level, and all of them are wrong if they think their piece is the whole system.

The irony is that our ever‑obedient LLMs are quietly encouraging this fragmentation. Ask an LLM for “AI governance”, and you get a polished, coherent document that feels comprehensive but is often only one slice of the full picture.

And now, because the bar to production is so low, some teams go one step further: they vibe‑code their partial understanding into a working software solution and call it done. That is where the real danger starts, especially when those vibe‑coded tools are not just built internally but procured from vendors.

The blind men and the AI‑governance elephant

In the classic parable, each blind man feels a different part of the elephant—ear, trunk, leg, tail—and insists his description is the whole truth. The same thing is happening in AI governance: every function is describing the beast in terms that make sense from their own vantage point.

The result is what some analysts call the “governance mirage”: 72% of enterprises believe they have adequate AI governance, yet most lack a single owner, clear accountability, or measurable control over AI systems in production.

The gap is not between “good policy” and “bad policy.” It is between performative policy gestures and governance that is actually woven into data pipelines, prompts, models, and code.

The rise of vibe coding as a governance‑in‑practice trap

Vibe coding is the practice of expressing intent in plain speech and letting an AI agent handle the heavy lifting across editors, terminals, and browsers. The human no longer writes every line; they guide, review, and ship.

This is where AI governance and software procurement collide:

A product owner can describe a “governance‑lite” feature in prose and have an AI agent turn it into a working dashboard.

A sales leader can take that demo and march into a board meeting claiming she is “operationalising governance,” even if the underlying logic is probabilistic, brittle, and undocumented.

One such pitch I saw recently even floated the idea of filing a patent on a vibe‑coded governance tool, while the live demo quietly took me to “base‑44” instead of a serious architecture review.

In other words, the bar to looking like you have governance is now lower than the bar to actually governing.

The craftsmanship collapse inside vibe‑coded systems

The deeper problem is not just the sales pitch. It is what’s under the hood. Evidence from longitudinal code‑base studies between 2020 and 2024 shows a measurable decline in software craftsmanship.

Refactoring, the practice of cleaning up code without changing behaviour, has collapsed from about 25% of code changes to less than 10% in AI‑heavy environments.

Code duplication has exploded, as AI tools prefer adding new lines over optimising existing ones, leading to a “big ball of mud” that is expensive to maintain.

Security vulnerabilities in AI‑generated code are found to be about 2.74 times more frequent than in human‑authored code, with common failure points like improper password handling and unrestricted file uploads.

These metrics are not just engineering footnotes; they are procurement signals. A vendor who ships features quickly through vibe coding may be delivering a system that is cheap to build but prohibitively expensive to maintain or extend.
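To make one of these signals concrete, here is a minimal sketch of a duplication check a buyer could run over a source file. It is a deliberately crude proxy, not the method used in the studies cited above: the function name `duplicated_line_ratio` and the `min_length` cut-off are my own assumptions, and a real audit would use a proper clone-detection tool.

```python
from collections import Counter


def duplicated_line_ratio(source: str, min_length: int = 20) -> float:
    """Share of substantial lines that appear more than once.

    Lines are compared after stripping surrounding whitespace; lines shorter
    than min_length (an assumed cut-off) are ignored so that braces, blank
    lines, and short keywords do not inflate the figure.
    """
    lines = [line.strip() for line in source.splitlines()]
    substantial = [line for line in lines if len(line) >= min_length]
    if not substantial:
        return 0.0
    counts = Counter(substantial)
    # Count every occurrence of a line that is repeated at least once.
    duplicated = sum(n for n in counts.values() if n > 1)
    return duplicated / len(substantial)
```

Tracked release over release, a rising ratio is a prompt to ask the vendor what is being consolidated and what is simply being regenerated.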

The credibility crisis in procured, vibe‑coded software

When an organisation buys vibe‑coded software, rather than building it internally or buying from a vendor that understands software development principles, the risk profile shifts sharply. The vendor is no longer just a builder; they become the interpreter between the system and the buyer’s governance model.

But interpretation is hard when:

Ownership is ambiguous. Much of the code is generated by an AI, stitched together from patterns in the training data. The vendor may not fully understand the underlying logic or the specific dependencies that the model injects.

Maintenance is shallow. If the original engineer or vendor departs, their successors often inherit an orphaned codebase with minimal documentation, an inconsistent architecture, and extensive code duplication. Unlike traditional SaaS vendors with centralised support structures, vibe‑coded tools can lack a clear owner for patching, updating, or retirement.

Failure is opaque. AI‑generated systems often suppress errors, use broad try‑except blocks, and return “success” when the task is incomplete or subtly wrong. This is why some experts stress the need for failure truthfulness: the property that the system’s observable outputs accurately represent its internal understanding of success or failure.
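The opaque-failure pattern is easy to recognise once you have seen it side by side with a truthful version. The sketch below is illustrative only: `flaky_upload`, `sync_suppressed`, `sync_truthful`, and `SyncError` are hypothetical names I made up, standing in for whatever the vendor's code actually calls.

```python
from __future__ import annotations


class SyncError(Exception):
    """Raised when the (hypothetical) record sync cannot complete."""


def flaky_upload(record: dict) -> None:
    # Stand-in for a real network or database call; rejects records without an id.
    if "id" not in record:
        raise ValueError("record has no id")


def sync_suppressed(records: list[dict]) -> bool:
    # Anti-pattern: a broad except swallows every error and still reports success.
    for record in records:
        try:
            flaky_upload(record)
        except Exception:
            pass
    return True


def sync_truthful(records: list[dict]) -> int:
    # Catch only the expected error and re-raise with context, so the caller
    # sees the failure and knows how far the sync got.
    uploaded = 0
    for record in records:
        try:
            flaky_upload(record)
        except ValueError as exc:
            raise SyncError(f"upload failed after {uploaded} records: {exc}") from exc
        uploaded += 1
    return uploaded
```

Given one valid and one invalid record, `sync_suppressed` returns `True` while `sync_truthful` raises `SyncError`. Only the second tells the buyer what actually happened.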

In practice, this means a vendor can ship a beautifully working demo, collect a contract, and then quietly retreat into “vibe debugging”: feeding error logs back into an AI, applying the suggested fixes, and declaring things resolved without ever understanding the root cause.

The AIRA‑style lens: demanding truthfulness in code

Simply asking for “secure” or “resilient” code is no longer enough. Organisations need to audit whether a codebase is truthful about its own state. This is where an AIRA‑style “failure truthfulness” framework becomes critical.

The core idea is straightforward: if the system is going to look good all the time, it had better be good in every measurable way. Some of the most telling checks are:

C01: Success Integrity – “Success” is only returned if the task is actually complete. No silent failures masked as “True.”

C03: Exception Suppression – broad try‑except blocks are not used to swallow errors and make the UI appear healthy.

C06: Ambiguous Contracts – None or Null is not used to hide failure states that downstream systems cannot distinguish from legitimate empty data.

C07: Parallel Logic Drift – semantic inconsistencies between different code paths are minimised, so the system does not behave unpredictably when switching features.

C11: Deterministic Drift – decision paths are kept as deterministic as possible, so identical inputs do not produce different results across sessions.

C13: Confidence Misrepresentation – degraded outputs are presented with an appropriate confidence posture, so users do not rely on low‑quality AI output as if it were factual.

C15: Idempotency Drift – retries do not cause duplicate data entries, which is critical for financial or record‑keeping systems.

A vendor who fails these checks is prioritising the vibe of a working product over actual technical reliability. Procurement teams should demand an AIRA‑style audit report for any business‑critical, vibe‑coded software and treat high failure rates—especially in exception handling and success integrity—as a red‑flag signal.
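Two of these checks, C06 and C15, are simple enough to illustrate in a few lines. What follows is a minimal sketch under my own assumptions, not code from any AIRA specification: `LookupResult`, `find_owner`, and `Ledger` are hypothetical names, and the in-memory dictionary stands in for a real datastore.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class LookupResult:
    """Explicit outcome, so 'no data' and 'lookup failed' cannot be confused (C06)."""
    ok: bool
    value: Optional[str] = None
    error: Optional[str] = None


def find_owner(registry: dict, model_id: str, backend_up: bool = True) -> LookupResult:
    if not backend_up:
        # A failure is reported as a failure, never as a bare None.
        return LookupResult(ok=False, error="registry unavailable")
    # ok=True with value=None means the lookup worked and nothing is recorded.
    return LookupResult(ok=True, value=registry.get(model_id))


@dataclass
class Ledger:
    """Records each payment once per idempotency key, so retries are safe (C15)."""
    entries: dict = field(default_factory=dict)

    def record_payment(self, idempotency_key: str, amount_cents: int) -> bool:
        if idempotency_key in self.entries:
            return False  # retry of an already-recorded request: no duplicate entry
        self.entries[idempotency_key] = amount_cents
        return True
```

A reviewer can probe both properties directly: look up a missing record with the backend up and down and confirm the results differ, then submit the same payment twice and confirm the ledger holds one entry.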

New vetting metrics for AI‑driven procurement

Organisations can no longer rely on static security checklists. They need dynamic, evidence‑based metrics that reflect the probabilistic nature of AI‑driven systems.

Useful metrics include:

Mean Time to Repair (MTTR) vs. Mean Time to Identify (MTTI): A low MTTR is only credible if MTTI is also low. If the vendor can fix bugs quickly but cannot explain why the fix worked, you are buying vibe debugging, not governed engineering.

Mean Time Between Failures (MTBF): A high MTBF indicates a stable system, but it must be balanced against Mean Time to Acknowledge (MTTA)—the time it takes human teams to notice and respond to failures.

Mean Time to Failure (MTTF) for non‑repairable components: AI‑generated dependencies can have hidden “end‑of‑life” points or brittle assumptions that undermine long‑term reliability.

These metrics are not just engineering trivia; they are governance signals. When a vendor cannot demonstrate strong MTTF/MTBF/MTTR profiles with clear explanations, they are implicitly admitting that their understanding of the system is shallow.
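As a rough illustration of how these figures can be derived from a vendor's incident log, here is a minimal sketch. The `Incident` fields, the minute-based timestamps, and the `fixed_before_understood` counter are my own assumptions; a real log will need mapping onto whatever the vendor's tooling records.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass
class Incident:
    # All timestamps are minutes since the start of the observation window.
    started: float      # failure began
    identified: float   # root cause identified
    resolved: float     # service restored


def reliability_profile(incidents: list[Incident], window_minutes: float) -> dict:
    if not incidents:
        raise ValueError("no incidents to analyse")
    downtime = sum(i.resolved - i.started for i in incidents)
    return {
        # Mean time from failure to an identified root cause.
        "mtti": mean(i.identified - i.started for i in incidents),
        # Mean time from failure to restored service.
        "mttr": mean(i.resolved - i.started for i in incidents),
        # Total uptime divided by the number of failures.
        "mtbf": (window_minutes - downtime) / len(incidents),
        # Incidents "fixed" before anyone knew the cause: the vibe-debugging tell.
        "fixed_before_understood": sum(1 for i in incidents if i.resolved < i.identified),
    }
```

The last field is the one to watch: an incident that is resolved before its cause is identified is exactly the pattern where a low MTTR should not be taken at face value.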

Outcome‑based, AI‑specific SLAs

Traditional SaaS agreements are often too vague for vibe‑coded, AI‑driven software. They are usually effort‑based (“respond within 4 hours”) and ignore the outcomes that matter to the buyer.

In the AI era, procurement must shift to outcome‑based SLAs that:

Tie availability and accuracy to actual system behaviour, not just ticketing statistics.

Impose penalties for hallucinated outputs, silent failures, or misattributed results.

Place explicit liability on the vendor for AI‑generated errors, not just human‑operator mistakes.
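To show what “outcome‑based” could mean in practice, here is a minimal sketch that checks a sample of independently verified request outcomes against contract thresholds. Everything specific in it is an assumption for illustration: the threshold values, the three outcome labels, and the function name `sla_breaches` would all come from the negotiated agreement and the buyer's own verification process.

```python
from __future__ import annotations

from collections import Counter

# Hypothetical contract terms; real thresholds would come from the negotiated SLA.
MAX_SILENT_FAILURE_RATE = 0.001
MIN_VERIFIED_ACCURACY = 0.98


def sla_breaches(outcomes: list[str]) -> list[str]:
    """Return the names of breached SLA terms.

    outcomes holds one label per sampled request, each being
    'correct', 'wrong', or 'silent_failure'.
    """
    if not outcomes:
        raise ValueError("no sampled outcomes")
    counts = Counter(outcomes)
    total = len(outcomes)
    breaches = []
    if counts["silent_failure"] / total > MAX_SILENT_FAILURE_RATE:
        breaches.append("silent_failure_rate")
    if counts["correct"] / total < MIN_VERIFIED_ACCURACY:
        breaches.append("verified_accuracy")
    return breaches
```

The point of the design is that the inputs are observed outcomes, not ticket response times, so a vendor cannot meet the SLA by answering quickly while the system quietly returns wrong results.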

Equally important are data‑ownership and retraining clauses:

The buyer must retain ownership of both input data and AI‑generated outputs.

The vendor must not retrain models on the buyer’s data without explicit consent.

Inputs must be encrypted, isolated, and deleted upon contract termination to prevent “data leakage.”

These are not legal niceties. They are the minimum conditions for trustworthy AI‑driven procurement when the vendor is effectively packaging AI‑generated code behind a sleek interface.

The way forward

The way forward is not about banning vibe coding or demanding that every vendor “go back to manual coding.” It is about recognising that the speed and polish of a vibe‑coded demo are no longer reliable proxies for vendor credibility; they often conceal weak architecture, shallow ownership, and opaque failure modes.

Organisations that buy AI‑enabled software must now treat procurement as a governance‑aware, technical‑audit function. That means:

Looking past the policy‑as‑PDF and the “AI‑governed” slide deck to ask: Can you show me the AIRA‑style checks on this codebase?

Demanding measurable evidence of maintainability and resilience: refactoring ratios, duplication, security‑vulnerability density, MTTF/MTBF/MTTR, and MTTI, not just feature‑level demos.

Tightening contracts so that vibe‑coded systems are covered by outcome‑based, AI‑specific SLAs, clear data‑ownership terms, and explicit liability for AI‑generated outputs.

The most dangerous thing about vibe coding is that it is not a bad product. It is a credible illusion: a vendor that can articulate governance in slides and code a demo in minutes, yet cannot prove they truly own, understand, or can sustainably operate the system long after the LLM has stopped generating the “vibe.”